Pulp: Native GPU UI for Audio Plugins and Apps, with React + AI Design Import

I’ve been wondering what it would look like to build modern audio software in a world where AI agents exist, without turning the whole thing into a soulless plugin factory.

For the past month I’ve been building an open-source framework called Pulp: a cross-platform, GPU-rendered, native-first framework for audio plugins and interactive creative tools.

Pulp targets standalone apps across macOS, Windows, Linux, iOS, and Android, along with plugin formats like VST3, AUv2, AUv3, CLAP, LV2, WAM, WebCLAP, and optionally AAX.

The core idea is simple:

I wanted browser-level capabilities and hot reloading without shipping a browser.

Most modern UI tooling assumes one of two things:

- you’re building a web app

- you’re embedding a WebView inside a native shell

Pulp takes a different approach.

It renders natively using Skia and modern GPU backends (Metal, Vulkan, D3D12 via Dawn/WebGPU), while exposing a React-style development model and compatibility layers for modern design tooling.

Interfaces can come from places like:

- React apps

- AI-generated HTML/React (from Claude Design)

- Figma MCP exports

- v0.app

- Google Stitch

- Pencil.dev

- handwritten Pulp code

…but they all execute as native GPU-rendered UI.

A big part of the thesis here is that web development workflows are genuinely powerful for creative tooling:

- fast iteration

- hot reload

- design-tool interoperability

- live experimentation

- shared component ecosystems

I was inspired by a lot of interesting projects over the last few years, but I kept feeling like there was still room for a system that treated native rendering, creative performance, and modern authoring workflows as first-class ideas simultaneously.

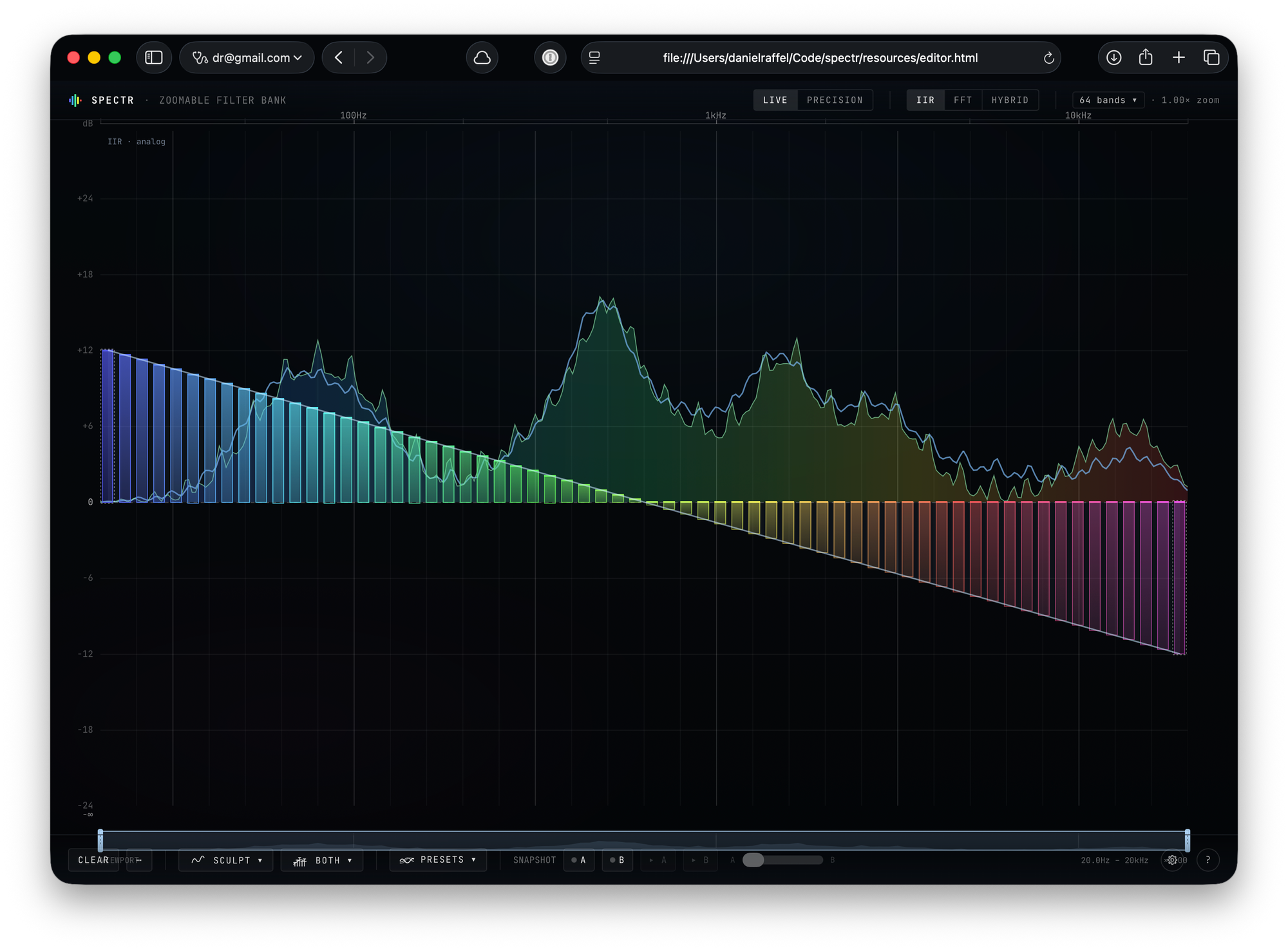

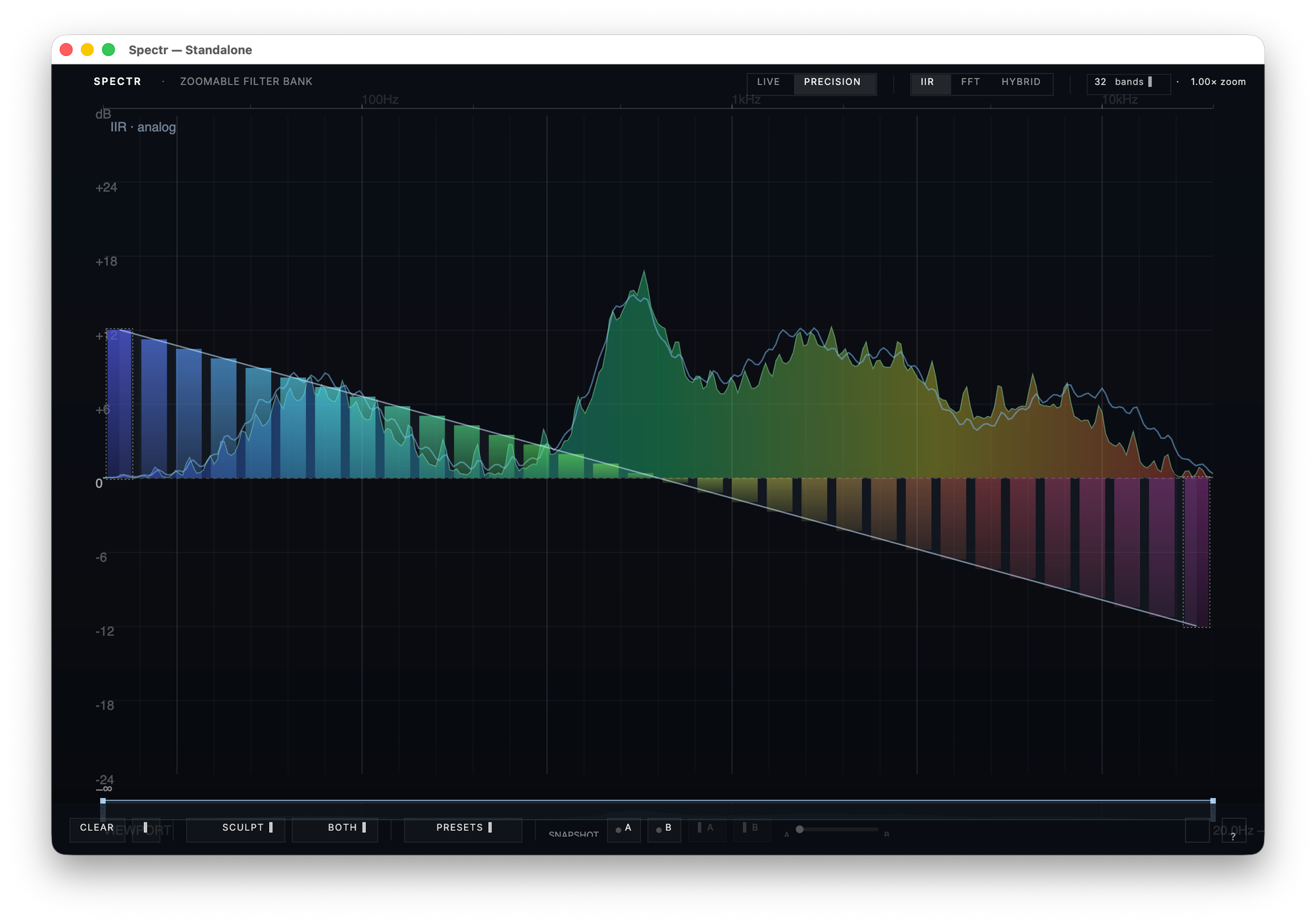

While building Pulp, I started using a plugin concept called Spectr to pressure-test the architecture.

I designed an intentionally ambitious React-based plugin UI in Claude Design and used it to see whether Pulp could actually support a highly dynamic interface running inside a real native audio plugin without embedding a browser anywhere in the stack.

That ended up exercising a lot of the harder parts of the system:

- native GPU rendering

- React reconciliation

- hot reload

- runtime UI execution

- bridging modern web-style workflows into a native plugin environment

Spectr is still evolving as an actual plugin, but it’s been a useful testbed for forcing the framework to solve real design problems instead of staying theoretical.

The problem

Creative-tool UI is currently stuck between two unsatisfying defaults.

On one side, you have native UI toolkits like JUCE, iPlug2, Qt, and AppKit. They’re fast, powerful, and ship cleanly inside a host process, but the authoring model is still far behind the modern web.

On the other side, you have web stacks like React, Tailwind, Figma Make, and other AI-assisted design tools. The authoring experience is dramatically better, but shipping that into a plugin or native app usually means embedding a browser runtime somewhere in the stack.

I wanted both:

native rendering, native lifecycle, and native plugin behavior — with the authoring ergonomics of modern web tooling.

Why existing approaches break down

The “embed a WebView” path is the most common workaround, and it has a few well-known failure modes inside audio software:

- WebViews are heavy. A WebView-based plugin typically brings up a browser-class runtime for every editor window.

- DAWs are not browsers. They open and close editor windows aggressively, share GPU contexts in unusual ways, and have their own input/event quirks.

- The native ↔ JS boundary becomes a serialization bottleneck.

- You inherit the browser’s sandbox, threading model, and update cadence — which rarely match real-time audio software.

The other common workaround is generating native code from a design tool once and maintaining it forever by hand. That works, but it kills the iteration loop. The moment the design changes, the native code is stale.

Pulp’s bet is that you don’t actually have to pick one of those tradeoffs.

Two execution models

One interesting thing that emerged while building Pulp is that there are at least two valid ways to bridge modern UI tooling into a native application.

They serve different needs, and Pulp supports both under one architecture.

One important detail: neither path renders HTML or CSS at runtime.

Both paths ultimately converge on the same native back end:

Yoga handles layout with CSS Flexbox + Grid semantics — no block flow, floats, table layout, or multi-column. Skia paints into Metal, Vulkan, and D3D12 surfaces via Dawn/WebGPU.

HTML and CSS exist as authoring inputs and mental models — not as runtime technologies.

What changes between the two paths is when the conversion into that native view/rendering system happens.

Path A: Design-to-native (compile-time)

This is the import pipeline.

A design or generated UI is imported once and converted into native Pulp TSX/components.

Inputs:

- Figma exports

- Claude-generated HTML

- v0 output

- Stitch

- Pencil

Outputs:

- editable TSX

- design tokens

- bridge scaffolding

- classname mappings

Under the hood, the importer walks the source structure, identifies semantic widgets and layout containers, and translates style declarations into Yoga layout properties and native paint state.

The generated TSX constructs Pulp Views directly.
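As a rough illustration of that translation step, here is a minimal sketch, not Pulp's actual importer. The `SourceNode` shape, the `IMPORT_LAYOUT_KEYS` list, and the `<View>` / `<Button>` component names with `layout` and `paint` props are all assumptions made up for this example. It splits style declarations into layout and paint groups and emits TSX text.

```typescript
// Hypothetical input shape for an imported design node (illustration only).
type SourceNode = {
  tag: string;
  style: Record<string, string>;
  children: SourceNode[];
};

// Assumed split: these keys feed layout; everything else is treated as paint state.
const IMPORT_LAYOUT_KEYS = [
  "display", "flexDirection", "flexGrow", "justifyContent",
  "alignItems", "gap", "width", "height", "padding", "margin",
];

function splitStyle(style: Record<string, string>) {
  const layout: Record<string, string> = {};
  const paint: Record<string, string> = {};
  for (const key of Object.keys(style)) {
    const target = IMPORT_LAYOUT_KEYS.indexOf(key) >= 0 ? layout : paint;
    target[key] = style[key];
  }
  return { layout, paint };
}

// Emit TSX text for a node and its children, using hypothetical component names.
function emitTsx(node: SourceNode, pad: string = ""): string {
  const component = node.tag === "button" ? "Button" : "View";
  const { layout, paint } = splitStyle(node.style);
  const props = `layout={${JSON.stringify(layout)}} paint={${JSON.stringify(paint)}}`;
  if (node.children.length === 0) {
    return `${pad}<${component} ${props} />`;
  }
  const inner = node.children.map((c) => emitTsx(c, pad + "  ")).join("\n");
  return `${pad}<${component} ${props}>\n${inner}\n${pad}</${component}>`;
}

const generatedTsx = emitTsx({
  tag: "div",
  style: { display: "flex", flexDirection: "row", backgroundColor: "#111" },
  children: [
    { tag: "button", style: { flexGrow: "1", color: "#fff" }, children: [] },
  ],
});
console.log(generatedTsx);
```

A real importer would also have to handle text, images, design tokens, and class names; the sketch only shows the layout-versus-paint split and the one-element-per-node emission.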

After import, the generated code becomes part of your application and can be edited, refactored, and shipped like any other source file.

At runtime:

- no HTML parser

- no CSS engine

- no browser runtime

- no embedded JS engine

Just native Pulp Views feeding Yoga and Skia.

Advantages:

- smaller binaries

- faster startup

- easier native debugging

- fully native ownership of the UI stack

Tradeoff:

- re-import is required when the upstream design changes

This path works well for static or semi-static interfaces where startup time, binary size, and native ownership matter most.

Path B: Live runtime

This is the “React bundle is the app” path.

Instead of converting ahead of time, Pulp runs a React bundle directly inside an embedded JS runtime and maps React reconciliation onto native GPU-rendered views in real time.

Your React source remains the source of truth.

State lives in React.

Updates happen live.

The current Spectr prototype runs this way today.

The original Spectr interface was designed in Claude Design and exported as a self-contained React bundle. That same React source now runs natively inside a C++ audio plugin through Pulp’s runtime bridge, with no DOM, no WebView, and no browser process anywhere in the stack.

Under the hood:

- the React app is bundled into a single JS file

- Pulp embeds QuickJS and loads that bundle at startup

- before React runs, Pulp installs a thin DOM-style shim layer

React still believes it’s talking to a browser:

- document.createElement(…)

- element.style.flexDirection = 'row'

- appendChild(…)

- reconciliation updates

…but every one of those calls maps onto native Pulp Views instead of real DOM nodes.
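To make the shim idea concrete, here is a minimal sketch under stated assumptions. `NativeView`, `ShimElement`, `documentShim`, and the `SHIM_LAYOUT_KEYS` list are hypothetical names invented for this example, not Pulp's API. It uses a standard JavaScript `Proxy` to intercept style assignments and forward them to a stand-in native view.

```typescript
// Hypothetical stand-in for a native view (illustration only).
class NativeView {
  kind: string;
  layout: Record<string, string> = {};
  paint: Record<string, string> = {};
  children: NativeView[] = [];
  constructor(kind: string) {
    this.kind = kind;
  }
}

// Assumed list of style keys that are routed to layout rather than paint.
const SHIM_LAYOUT_KEYS = ["display", "flexDirection", "flexGrow", "width", "height"];

class ShimElement {
  readonly view: NativeView;
  readonly style: Record<string, string>;

  constructor(tag: string) {
    const view = new NativeView(tag);
    this.view = view;
    // The Proxy intercepts `element.style.x = y` and forwards it to the view.
    this.style = new Proxy({} as Record<string, string>, {
      set(target, key, value) {
        const name = String(key);
        target[name] = value;
        if (SHIM_LAYOUT_KEYS.indexOf(name) >= 0) {
          view.layout[name] = value;
        } else {
          view.paint[name] = value;
        }
        return true;
      },
    });
  }

  // Mirrors the DOM call, but links stand-in native views instead of DOM nodes.
  appendChild(child: ShimElement): ShimElement {
    this.view.children.push(child.view);
    return child;
  }
}

// Exposes the one `document` method this sketch needs.
const documentShim = {
  createElement: (tag: string) => new ShimElement(tag),
};

const shimRoot = documentShim.createElement("div");
shimRoot.style.flexDirection = "row";
shimRoot.style.backgroundColor = "#222";
const shimChild = shimRoot.appendChild(documentShim.createElement("span"));
console.log(shimRoot.view.layout, shimRoot.view.paint, shimRoot.view.children.length);
```

React's DOM renderer relies on far more surface than this (events, attributes, text nodes, removal and reordering), so a working shim is much larger; the sketch only shows the routing idea.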

Style assignments become Yoga layout declarations and Skia paint state directly.

Each reconciliation pass updates the native Pulp view tree, Yoga computes layout, and Skia paints the result through the GPU backend.
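The update, layout, paint sequence can be sketched as a small dirty-flag loop. This is an assumption-laden illustration: the `ViewNode` fields, `commit`, and `tick` are hypothetical, the layout function is a simplified flex-grow distribution standing in for Yoga, and the paint function records strings instead of issuing Skia calls. Whether Pulp batches work this way is not stated in this post.

```typescript
// Hypothetical view node for a single flex row (illustration only).
type ViewNode = { name: string; basis: number; grow: number; x: number; width: number };

// Stand-in for layout: share the row's free space in proportion to flex-grow.
// Ignores shrink, wrapping, gaps, and min/max constraints.
function layoutRow(children: ViewNode[], rowWidth: number): void {
  let used = 0;
  let totalGrow = 0;
  for (const c of children) {
    used += c.basis;
    totalGrow += c.grow;
  }
  const free = Math.max(0, rowWidth - used);
  let x = 0;
  for (const c of children) {
    c.width = c.basis + (totalGrow > 0 ? (free * c.grow) / totalGrow : 0);
    c.x = x;
    x += c.width;
  }
}

// Stand-in for paint: record draw commands instead of touching a GPU surface.
function paintRow(children: ViewNode[]): string[] {
  return children.map((c) => `rect ${c.name} x=${c.x} w=${c.width}`);
}

const rowViews: ViewNode[] = [
  { name: "A", basis: 100, grow: 0, x: 0, width: 0 },
  { name: "B", basis: 50, grow: 1, x: 0, width: 0 },
  { name: "C", basis: 50, grow: 3, x: 0, width: 0 },
];
let treeDirty = false;

// A reconciliation pass mutates the tree and marks it dirty.
function commit(mutate: () => void): void {
  mutate();
  treeDirty = true;
}

// A frame tick runs layout and paint only when something changed.
function tick(rowWidth: number): string[] | null {
  if (!treeDirty) return null;
  layoutRow(rowViews, rowWidth);
  treeDirty = false;
  return paintRow(rowViews);
}

commit(() => { rowViews[1].basis = 50; });
const frameCommands = tick(300);
console.log(frameCommands);
// → ["rect A x=0 w=100", "rect B x=100 w=75", "rect C x=175 w=125"]
```

With a 300-unit row and 200 units of basis, the 100 free units split 1:3 between B and C, which is where the widths 75 and 125 come from.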

So while the React ecosystem thinks it’s targeting the web, the actual runtime remains fully native from top to bottom.

Advantages:

- full React interactivity

- compatibility with much of the existing React ecosystem

- extremely fast iteration loops

- hot reload against native rendering

Tradeoffs:

- runtime JS overhead

- more moving parts in the runtime bridge

- larger binaries because the JS engine ships with the plugin

What about the rest of the web ecosystem?

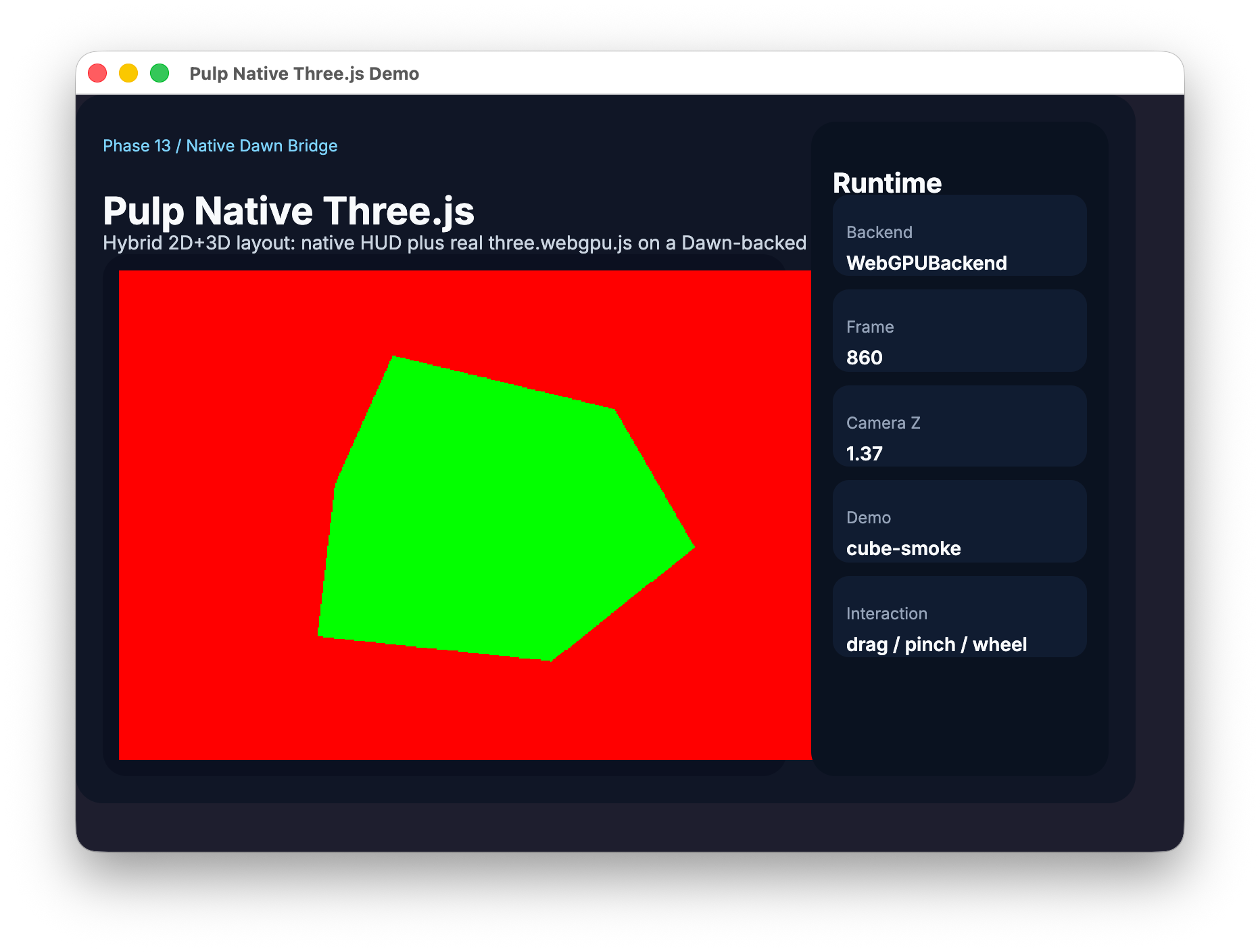

To pressure-test the runtime bridge with something heavier than a plugin UI, I also got Three.js running through the same path.

Specifically, the three.webgpu.js renderer talks directly to Pulp’s Dawn-backed WebGPU surface:

- full 3D scene graphs

- materials

- animation

- GPU rendering at frame rate

…all inside a native app with no browser involved anywhere in the stack.

That was an important signal that the runtime model can support ambitious renderer-oriented web ecosystems, not just plugin chrome and control panels.

A natural follow-up question is:

“Could you run something like jQuery on this?”

The honest answer is: partially.

Basic DOM-style operations — traversing nodes, mutating elements, assigning classes/styles — can route through the shim layer. But browser platform APIs like:

- XMLHttpRequest

- fetch

- browser navigation

- localStorage

- cookies

- the traditional page lifecycle

…aren’t really the target.

More importantly, jQuery is fundamentally the wrong shape for what Pulp is trying to do.

The libraries that fit well are the ones that think in terms of:

- components

- renderers

- scene graphs

- reconciliation

React is a good fit.

Three.js is a good fit.

Anything designed around a custom renderer or declarative rendering model tends to map well.

The libraries that fit poorly are the ones that assume a traditional browser environment with a full DOM, networking stack, storage layer, and page-oriented runtime underneath them.

Pulp isn’t trying to recreate Chrome.

The goal is to make modern UI and rendering workflows portable into a native runtime without dragging an entire browser engine into audio software.

Why both execution models matter

Some developers want:

“Turn my design into native code and let me own it.”

Others want:

“Let my React app become a native plugin/app runtime.”

Both workflows are legitimate, and they tend to map to different stages of a project.

Early in a design, when the UI is changing daily and experimentation matters most, Path B keeps the loop tight and avoids constant re-import cycles.

Later, once the UI stabilizes and startup time and binary size matter more, Path A lets you bake the interface down into native code you directly control.

The same Pulp project can move between the two over its lifetime without rewriting the application.

That flexibility is what makes the architecture feel different from “yet another UI toolkit.”

The interesting abstraction isn’t really the widget set — it’s the ability to move between execution models without rebuilding your UI architecture from scratch.

Current alpha status

Pulp is in active alpha development.

What’s already working:

- GPU rendering via Skia + Dawn/WebGPU on Metal, Vulkan, and D3D12

- Yoga-based layout with CSS Flexbox and Grid semantics

- React reconciliation against the native view tree

- design import pipelines for modern tooling

- native bridge infrastructure

- cross-platform rendering across macOS, Windows, Linux, iOS, and Android

What’s still evolving quickly:

- APIs

- tooling

- runtime behavior

- compatibility layers

- documentation

As of May 2026, Pulp isn’t ready for outside developers to build production software on, and it’s still too early for me to encourage people to seriously kick the tires. The docs, internal APIs, tooling, and developer experience all still need substantial cleanup.

But it’s under active development, and the architecture has started crossing the line from “idea” into something tangible and working. It’s become a large enough part of my life that I wanted to start talking about it publicly instead of waiting for everything to feel polished.

One thing I haven’t gone into is how AI agents fit into all of this.

A large part of Pulp’s architecture was shaped by the idea that creative tooling is increasingly going to be built alongside systems like Claude, Codex, MCP-connected tools, and other agent-driven workflows — not just traditional editors and IDEs.

A lot of the import pipeline, runtime architecture, and hot-reload workflow starts looking different when you assume the “developer” might partially be an autonomous system iterating on UI, rendering, layout, and interaction loops in real time.

That’s worth a separate post on its own. In the meantime, I think native creative tooling is about to get very interesting.

Take a peek

Pulp is MIT licensed and lives here: Pulp on GitHub

Feedback, experiments, contributors, and bug reports are welcome.